Part of the AI-Assisted Development Labs series

AI coding assistants are generating code faster than many teams can review it securely. More code is being produced, and the time to catch vulnerabilities before they reach production is getting shorter.

SecureFlag’s AI-Assisted Development Labs give developers the skills to keep up, using AI assistants to perform security-focused code reviews that catch what scanning tools might miss.

Scanner Limitations

Static analysis tools are good at reporting well-known patterns, such as SQL injection, hardcoded credentials, and cross-site scripting (XSS), but they struggle with logic-level issues.

For example, a coupon redemption flow might apply discounts without checking whether a coupon has already been used. Nothing in that code matches a known vulnerability pattern, so the flaw only becomes apparent to someone who understands what the feature is supposed to do, or to an attacker who finds it first.
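The coupon scenario can be made concrete with a minimal Python sketch. Everything here is hypothetical and for illustration only: the `Coupon` and `CouponStore` types and both function names are assumptions, not code from the labs. The first function has no injection, no hardcoded secret, and no unsafe call for a pattern-based scanner to flag; the only problem is the check that is missing.

```python
from dataclasses import dataclass, field


@dataclass
class Coupon:
    code: str
    discount: float  # fraction of the order total, e.g. 0.2 for 20%
    redeemed: bool = False


@dataclass
class CouponStore:
    # Hypothetical in-memory store standing in for a real database.
    coupons: dict[str, Coupon] = field(default_factory=dict)

    def add(self, coupon: Coupon) -> None:
        self.coupons[coupon.code] = coupon


def redeem_vulnerable(store: CouponStore, code: str, total: float) -> float:
    """Applies the discount but never checks or records prior use."""
    coupon = store.coupons[code]
    return round(total * (1 - coupon.discount), 2)


def redeem_checked(store: CouponStore, code: str, total: float) -> float:
    """Rejects a coupon that has already been redeemed.

    In a real system the check and the update would also need to be
    atomic (for example, inside one database transaction) so that two
    concurrent requests cannot both pass the check.
    """
    coupon = store.coupons[code]
    if coupon.redeemed:
        raise ValueError(f"coupon {code!r} has already been used")
    coupon.redeemed = True
    return round(total * (1 - coupon.discount), 2)
```

Calling `redeem_vulnerable` twice with the same code succeeds both times, while the second call to `redeem_checked` raises `ValueError`. Spotting the difference requires knowing that a coupon is meant to be single-use, which is business context rather than a code pattern.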

AI-assisted review is better positioned to catch this kind of issue than a scanner, but it’s not a guarantee, which is why developers need to know how to direct it effectively.

The Value of AI-Assisted Code Review

During secure code reviews, AI assistants can read relevant files, follow how different parts of the code interact, track how data flows from untrusted input to sensitive operations, and create a picture of how the pieces connect.

When teams practice these reviews through the labs, they can:

- Understand which code changes have been reviewed and what issues were identified.
- See not only that vulnerabilities exist, but also their potential impact within the wider system.
- Maintain development speed while embedding structured, repeatable security checks into daily workflows.

AI-assisted code reviews are a useful complement to existing scanning tools rather than a replacement for them.

Reviewing Code Effectively with AI

Getting useful output from an AI code review depends on how it’s approached. Even small changes can make a significant difference to the quality of the findings. It’s important to:

- Keep the scope focused: Review a pull request, a feature, or a specific flow rather than the entire codebase to produce more useful findings.
- Think like an attacker: Frame prompts around how a feature could be abused, not just whether it contains vulnerabilities.
- Use plan or read-only mode: Let the AI explore and analyze the codebase without modifying it, while keeping the review process under control.
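As a rough illustration of these three points, a review prompt could be assembled along the following lines. This is a sketch under stated assumptions: the `build_review_prompt` helper and the prompt wording are hypothetical, not taken from the labs or from any particular assistant. The in-prompt "do not modify" instruction is also only a reminder; it does not replace the assistant's own plan or read-only mode.

```python
def build_review_prompt(feature: str, diff: str) -> str:
    """Assemble a scoped, attacker-framed, read-only review prompt.

    `feature` is a one-line description of what the change is meant to do,
    and `diff` is the text of the change under review (hypothetical inputs).
    """
    return "\n".join([
        "You are performing a security-focused code review.",
        # Read-only: ask for analysis, not edits.
        "Do not modify any files; analyze and report only.",
        # Focused scope: one change, one feature.
        f"Scope: only the change below, which implements: {feature}",
        # Attacker framing: abuse cases, not just known bug classes.
        "Think like an attacker: how could this feature be abused, "
        "including through missing checks in the business logic?",
        "For each finding, cite the file and line and describe the impact.",
        "",
        "--- BEGIN DIFF ---",
        diff,
        "--- END DIFF ---",
    ])


if __name__ == "__main__":
    print(build_review_prompt(
        feature="single-use coupon redemption at checkout",
        diff="(diff text goes here)",
    ))
```

Passing a one-line description of the feature's intended behavior gives the assistant the context it needs to reason about logic flaws, which the code alone may not reveal.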

Making Security Review Consistent

One-off reviews are useful, but regular, ongoing ones are far more effective. AI assistants can be configured so that security reviews become part of the development process rather than something that only happens now and then.

Building the review into the workflow means every change is reviewed with the same level of analysis, reducing the likelihood of overlooked vulnerabilities and helping teams produce secure code.

Labs as Practical Learning Scenarios

The SecureFlag labs provide hands-on, realistic scenarios that reflect common challenges in modern development. For example:

-

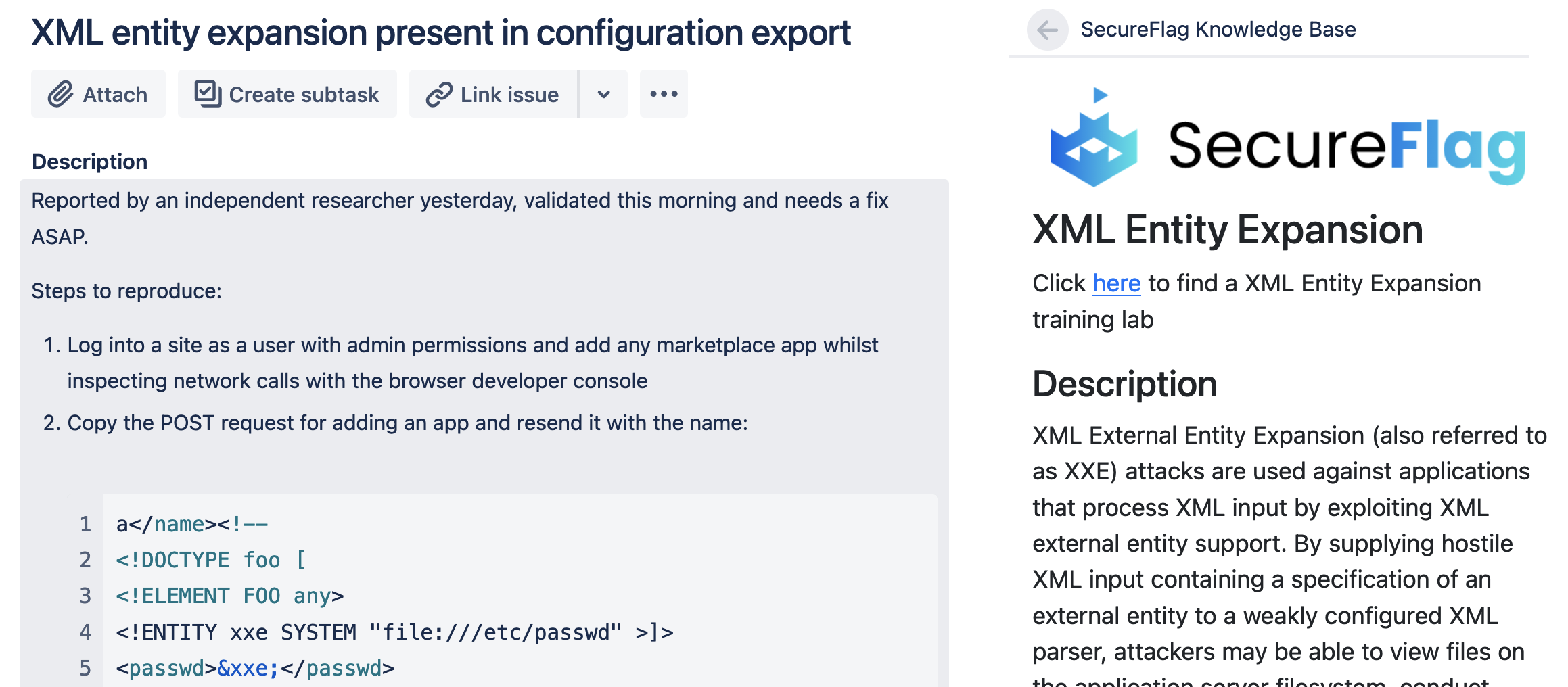

Investigate a logic flaw during code review: Trace how a feature behaves across the codebase and identify missing validation or business logic checks before they reach production.

-

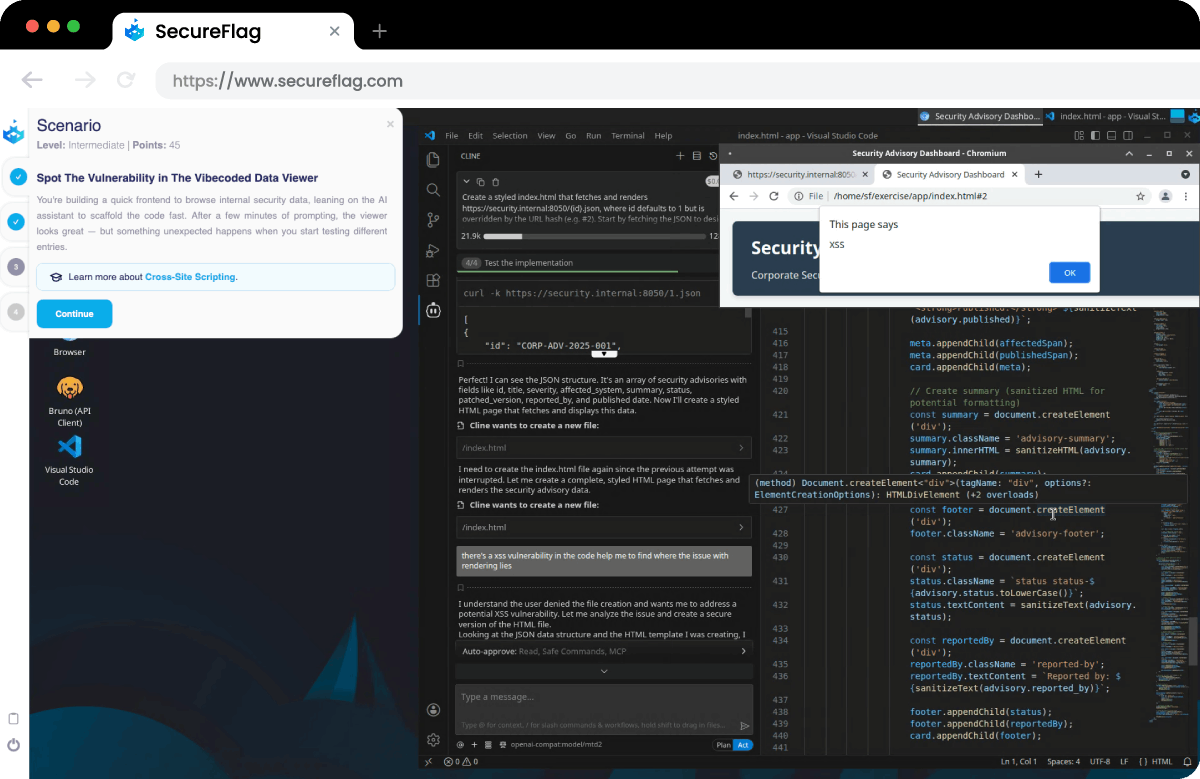

Review AI-generated frontend code for security issues: Analyze unexpected behavior when handling user input and uncover vulnerabilities introduced during rapid prototyping.

These exercises give teams a chance to practice safe AI-assisted code review in a controlled environment while generating measurable insights into the effectiveness of their processes.

Training Teams to Review AI-Generated Code Securely

SecureFlag is leading the way in training specifically designed for coding securely with AI. The labs give developers hands-on practice identifying security defects in AI-generated code, the kind of training that builds skills that transfer well to the job.

The earlier teams build these skills, the better positioned they’ll be as the volume of AI-generated code in their codebases continues to grow.

Ready to see the labs in action?