Between the cookie pop-ups on websites, opt-out options on forms, and registration screens, users of websites, online stores, and social media are becoming more aware of how their personal data is used. In fact, a 2019 survey from the Pew Research Center found that 7 out of 10 Americans feel their personal information is less secure than it was in 2014. Websites and businesses are clearly becoming more transparent about data collection and use on the internet, but is transparency enough? Below, we go through some other considerations businesses should address and how to leverage threat modeling for privacy.

The Importance of Privacy in Modern Software

Moral obligations

When your organization handles customer data, it is being trusted with personal information that, if mishandled, could lead to a person being identified or compromised.

Consumer awareness

As mentioned earlier, consumers and customers are more aware than ever of how their data is being collected, utilized, and potentially sold to third-party vendors for marketing and tracking purposes. This awareness has been heightened by more prominent pop-ups on websites and by mobile operating systems that restrict app permissions.

Reputation of organization

No business wants to suffer a data breach or be accused of negligence in handling personal data. The reputational stakes alone can be high: potential customers may be discouraged from using your services in the future, and existing customers may cancel their service or, at worst, hold your company legally responsible for any damages they suffer as a result.

Privacy Regulations

Many regions and countries have implemented strict data protection laws, and adherence to these regulations is both a legal requirement and essential for avoiding severe penalties and legal consequences. Most readers will recognize the EU’s General Data Protection Regulation (GDPR) as the most prominent example of data protection law. It places obligations on organizations handling the personal data of individuals in the EU, and organizations that fail to comply risk a hefty fine.

But it’s not only in the EU that we see this kind of law. In the UK, the Data Protection Act sets out rules called ‘data protection principles’; everyone responsible for using personal data must make sure, among other things, that the information is used fairly, lawfully and transparently. In the USA, the California Consumer Privacy Act (CCPA) and the California Privacy Rights Act (CPRA) give California residents the right to know what data is being collected about them and the right to opt out of the sale of their data. The Health Insurance Portability and Accountability Act (HIPAA) is a federal law that sets standards for protecting health information. Likewise, the Gramm-Leach-Bliley Act is a federal law that safeguards the security and confidentiality of customer information, aimed mainly at banks, credit unions, and other financial institutions.

What is Privacy Threat Modeling?

Threat modeling for privacy involves a rigorous process of identifying and mitigating potential threats to the privacy of individuals’ personal data within software systems and applications. Traditional threat modeling focuses on security threats, whereas privacy threat modeling explicitly addresses risks related to the unauthorized collection, use, disclosure, or misuse of personal information.

The Basics of Privacy Threat Modeling

Privacy threat modeling typically begins with identifying sensitive data elements handled by the system, such as personally identifiable information or sensitive personal data. This includes understanding the types of data collected, the purposes for which it is collected, and the potential impact on individuals if the data were compromised.
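The inventory step described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed format: the `DataElement` fields, the `Impact` scale, and the sample entries are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Impact(Enum):
    """Hypothetical three-level scale for harm to the individual."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class DataElement:
    name: str       # field name as it appears in the system
    category: str   # e.g. "contact", "health", "financial"
    purpose: str    # why it is collected; empty if nobody could say
    impact: Impact  # harm to the individual if the data were compromised


def needs_review(inventory: list[DataElement]) -> list[str]:
    """Return names of elements that are high impact or lack a stated purpose."""
    return [
        element.name
        for element in inventory
        if element.impact is Impact.HIGH or not element.purpose.strip()
    ]


# Illustrative entries only; a real inventory comes from the system itself.
inventory = [
    DataElement("email", "contact", "account login and notifications", Impact.MEDIUM),
    DataElement("date_of_birth", "identity", "", Impact.MEDIUM),
    DataElement("diagnosis_code", "health", "claims processing", Impact.HIGH),
]

print(needs_review(inventory))  # ['date_of_birth', 'diagnosis_code']
```

Flagging elements with no stated purpose is a deliberate choice here: data that nobody can justify collecting is usually the first candidate for removal.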

With the sensitive data mapped, the next step is to identify the threats and vulnerabilities that could expose it. Once these are identified, mitigation strategies are developed to address them effectively. This may involve technical controls, such as encryption, anonymization, and access controls, as well as procedural measures, such as privacy policies, data minimization practices, and user consent mechanisms.
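As a rough illustration of two of the controls mentioned above, the sketch below combines data minimization with pseudonymization of an identifier using a keyed hash (HMAC-SHA256) from the Python standard library. Note that pseudonymization is weaker than full anonymization, since anyone holding the key can re-link records. The field names, the `ANALYTICS_FIELDS` allow-list, and the hard-coded key are assumptions for the example; a real key would come from a secrets manager.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields the analytics pipeline needs.
ANALYTICS_FIELDS = {"user_id", "country", "plan"}


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed hash so records stay linkable
    to each other but are not directly identifying without the key."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, allowed_fields: set[str]) -> dict:
    """Keep only the fields on the allow-list and drop everything else."""
    return {k: v for k, v in record.items() if k in allowed_fields}


def prepare_for_analytics(record: dict, key: bytes) -> dict:
    """Minimize the record, then pseudonymize the remaining identifier."""
    reduced = minimize(record, ANALYTICS_FIELDS)
    reduced["user_id"] = pseudonymize(str(record["user_id"]), key)
    return reduced


# Example key for demonstration only; never hard-code a real key.
demo_key = b"example-key-for-illustration-only"
raw = {
    "user_id": 1042,
    "email": "alice@example.com",
    "country": "BE",
    "plan": "pro",
}
safe = prepare_for_analytics(raw, demo_key)
print(sorted(safe))  # ['country', 'plan', 'user_id']
```

Using an allow-list rather than a block-list means that any new field added to the record later is excluded by default until someone decides it is needed.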

Requirements of threat modeling

Throughout the process, privacy threat modeling requires collaboration among stakeholders, including developers, privacy experts, legal professionals, and other relevant parties, to ensure that privacy risks are adequately understood and addressed. By integrating privacy considerations into the software development lifecycle, organizations can build systems that respect individuals’ privacy rights and mitigate the risk of privacy breaches.

Introducing LINDDUN for Effective Privacy Threat Modeling

LINDDUN is a threat modeling framework designed to model privacy threats rather than security threats. It was developed by experts at KU Leuven, a university in Belgium. The name is an acronym that stands for the different threat types it is used to investigate:

- Linking - Refers to associating different data elements with each other in such a way that it leads to undesirable privacy implications.

- Identifying - Expresses situations in which a user’s identity can be learned through leaks, deductions, or inferences when this is unwanted and to be prevented.

- Non-repudiation - Pertains to situations where an individual can no longer deny specific claims.

- Detecting - These are situations where an individual’s involvement, participation, or membership can be deduced through observation.

- Data Disclosure - Is transferring personal data across a boundary, i.e., data collection by the system or data transfer to a known or unknown third party.

- Unawareness - Threats occur when individuals are insufficiently informed, involved, or empowered concerning the processing of their personal data.

- Non-compliance - Arises when the system deviates from legislation, regulation, standards, and best practices, leading to incomplete risk management.

LINDDUN provides a structured approach for analyzing privacy threats and helps organizations design systems with privacy in mind. It can also be integrated into existing software development processes, such as security threat modeling, so that organizations can streamline privacy risk management and keep it consistent with their other risk management activities.
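To make the seven categories concrete, here is a small Python sketch that pairs each data flow in a system with each LINDDUN threat type, producing a list of questions for the team to work through. It is a simplified checklist under our own assumptions, not the full LINDDUN method; the `DataFlow` structure and the sample flows are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Linddun(Enum):
    """The seven threat types, in the order that spells the acronym."""
    LINKING = "Linking"
    IDENTIFYING = "Identifying"
    NON_REPUDIATION = "Non-repudiation"
    DETECTING = "Detecting"
    DATA_DISCLOSURE = "Data Disclosure"
    UNAWARENESS = "Unawareness"
    NON_COMPLIANCE = "Non-compliance"


@dataclass(frozen=True)
class DataFlow:
    source: str
    destination: str
    data: tuple[str, ...]  # personal data elements carried by this flow


def worksheet(flows: list[DataFlow]) -> list[tuple[DataFlow, Linddun]]:
    """Pair every data flow with every threat type as an open question."""
    return [(flow, threat) for flow in flows for threat in Linddun]


# Illustrative flows only.
flows = [
    DataFlow("web app", "orders database", ("email", "shipping_address")),
    DataFlow("orders database", "marketing vendor", ("email",)),
]

for flow, threat in worksheet(flows):
    print(f"{flow.source} -> {flow.destination}: {threat.value}?")
```

Each printed line is a prompt for discussion, for example whether sending `email` to a marketing vendor creates a Data Disclosure or Unawareness threat. Answering those questions still requires the collaboration between developers, privacy experts, and legal professionals described earlier.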

Creating Privacy-Focused Threat Models with ThreatCanvas

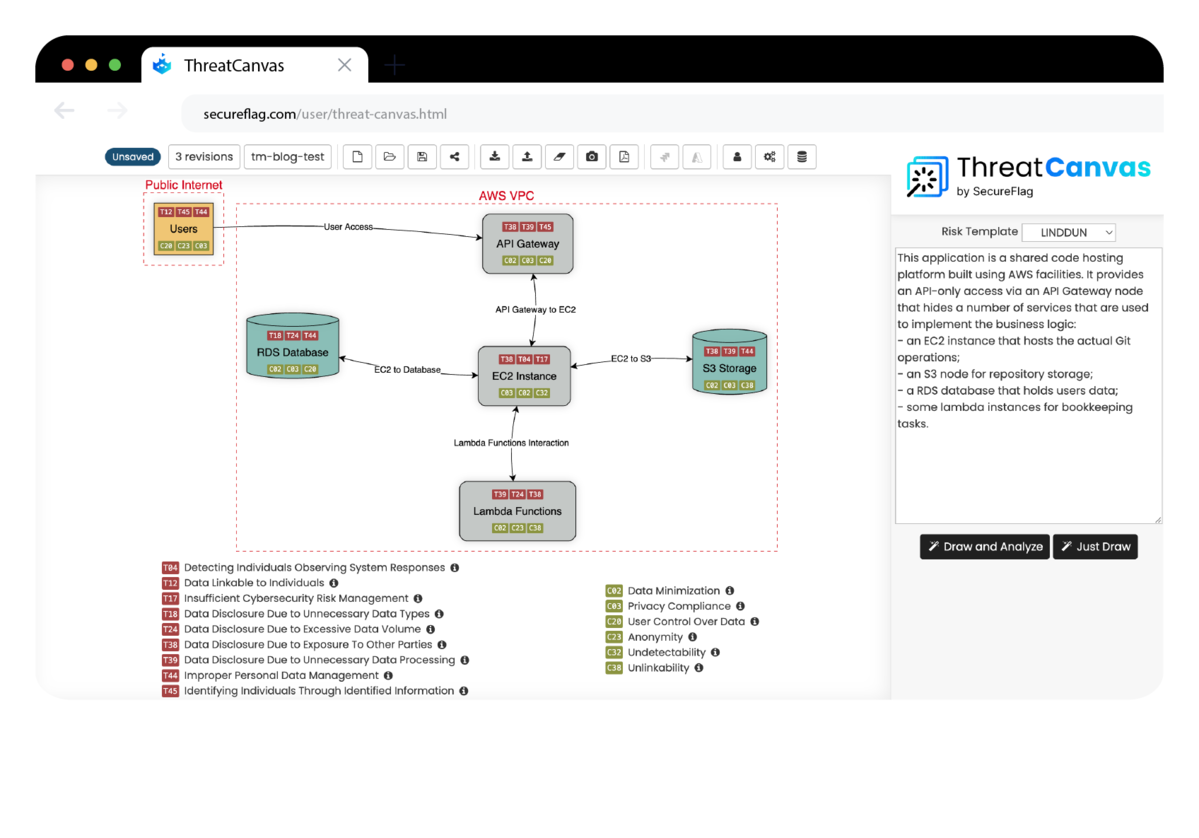

With SecureFlag’s automated Threat Modeling solution, ThreatCanvas, creating and exploring Privacy Threat Models is easier than ever. Using ThreatCanvas, you can generate Threat Models by providing either a detailed description or an Infrastructure-as-Code (IaC) template.

Not only does ThreatCanvas draw the threat model from your prompt, but it also analyzes it according to the chosen “Risk Template”. In the example below, we describe an application using a range of AWS services such as EC2, S3, Lambda functions, and an RDS database. With this information, ThreatCanvas can draw a Threat Model and identify the different Threats and Controls.

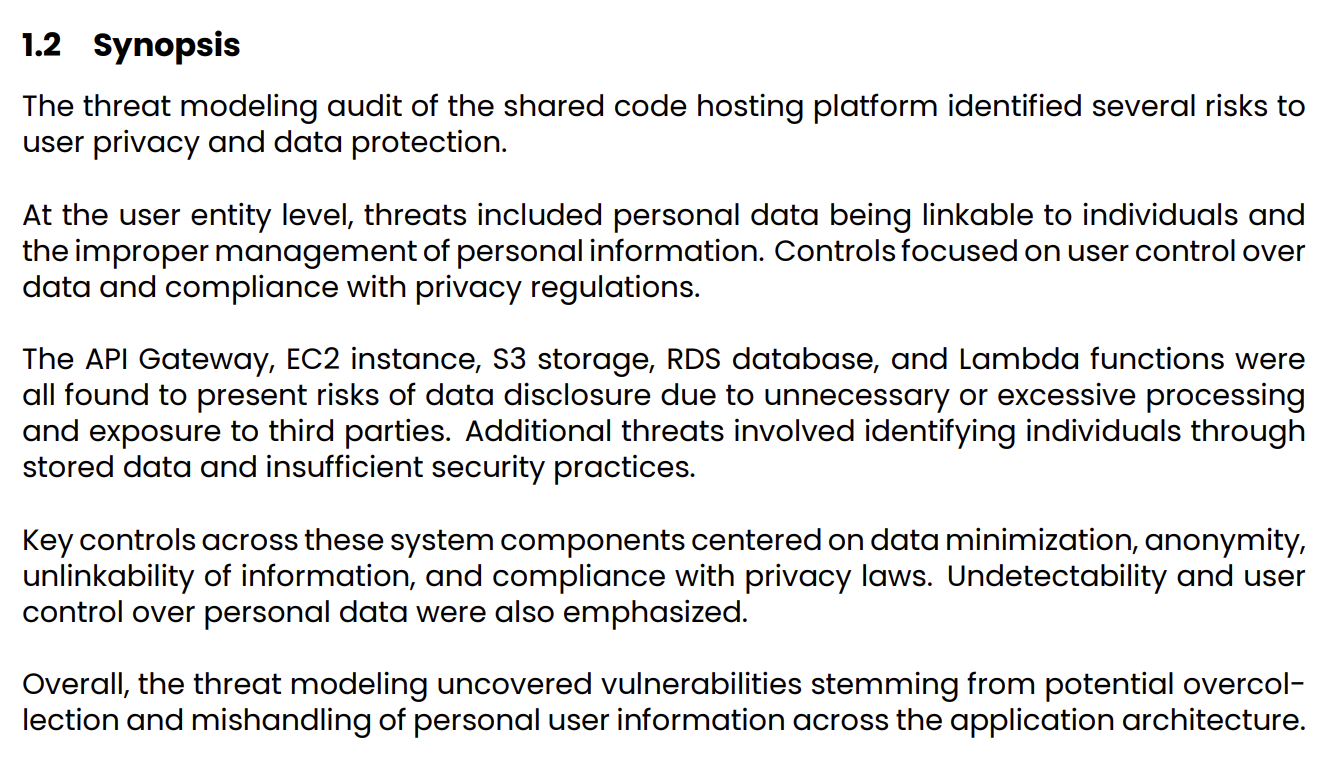

After generating the model, stakeholders at all levels must be able to comprehend what the model represents. That’s why with ThreatCanvas, AI-assisted report generation is built into the tool. Take a look at the example below, which gives a readable synopsis of the described application and the threats it encounters.

To wrap up, the heavy emphasis on data privacy and protection isn’t likely to go away anytime soon. The good news is that with ThreatCanvas and frameworks such as LINDDUN, identifying and remediating privacy issues has become substantially easier to tackle! So, contact us today to learn more about ThreatCanvas and SecureFlag and how we can help save your organization time by tackling privacy through threat modeling earlier in the Software Development Life Cycle (SDLC).

Source: Pew Research Center, “Americans and Privacy: Concerned, Confused, and Feeling Lack of Control Over Their Personal Information” (November 15, 2019). Available at: How Americans think about privacy and the vulnerability of their personal data.